Why I trust language but I don't trust AI

Why AI does not know things, while speaking of things. Why AI shows things, without seeing things. A sensible reasoning about ChatGPT and MidJourney.

The study of language and its underlying structure has long been a subject of fascination for linguists, philosophers, and cognitive scientists. One of the most influential figures in this field is Noam Chomsky, who argued in his 1957 book Syntactic Structures that language is fundamentally a system of rules and conventions learned and internalized by individuals. “Grammar is best formulated as a self-contained study independent of semantics.”

According to Chomsky, the ability to generate and understand language is not based on knowledge or experience, but rather on the innate structure of the human brain.

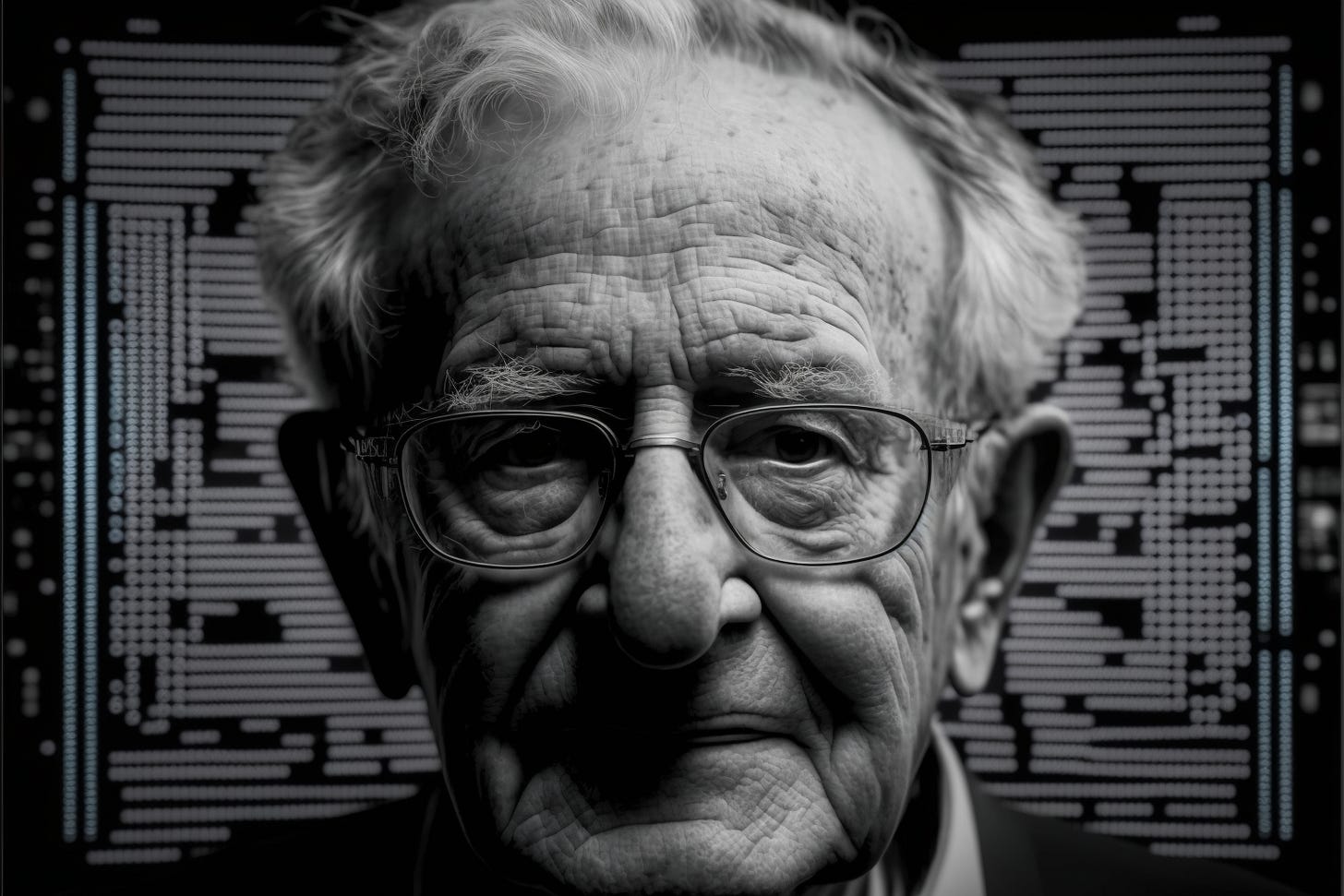

Midjourney: artificial intelligence is talking to a portrait of Noam Chomsky --ar 3:2

In general, semantics is the study of meaning in language. It is concerned with interpreting words, phrases, and sentences in context, and how they convey meaning.

Semantics is an essential aspect of language and communication because it helps us understand how words and phrases convey meaning. It also helps us understand how people use language to express their thoughts, feelings, and intentions, and how they interpret the language of others.

This theory has important implications for artificial intelligence (AI), particularly in developing conversational AI systems like Generative Pre-trained Transformers (GPT).

GPT is a type of AI designed to generate human-like text by learning from a massive dataset of human-generated text, such as books, articles, and websites. It can generate coherent and contextually appropriate text, which means that when you ask GPT a question, it can generate an answer that is grammatically correct and relevant to the context of the conversation.

So we humans use the processes of semantics to understand what AI is telling us. The AI, however, has built its answer by simply mimicking the practical structure of language - grammar - using patterns it learned during pre-training.

So when machines like ChatGPT ‘talk’ to us, they use validated grammar structures derived from the meaningful text used to train them, but without any semantic understanding of their own.

It’s like drawing after something that we don’t understand: we stay focused on the similarities of the original subject to our drawing hoping that someone else, when seeing it, will recognize the subject because we can’t.
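The idea of producing plausible text from learned patterns alone can be sketched with a toy example. The snippet below is only an illustration and is far simpler than GPT: it is a bigram model that records which word follows which in a tiny made-up corpus, then strings words together by picking from those recorded followers. The corpus, the function names `train_bigrams` and `generate`, and the fixed random seed are all assumptions made for this sketch.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Record, for every word, the words that followed it in the text."""
    words = text.split()
    followers = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers


def generate(followers, start, length, seed=0):
    """Chain words by repeatedly picking a recorded follower of the last word."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    output = [start]
    for _ in range(length - 1):
        options = followers.get(output[-1])
        if not options:  # the last word was never followed by anything
            break
        output.append(rng.choice(options))
    return output


# Hypothetical miniature "training dataset"
corpus = "the cat sat on the mat and the dog sat on the rug"
model = train_bigrams(corpus)
sentence = generate(model, "the", 8)
print(" ".join(sentence))
```

Every pair of adjacent words in the output was seen in the corpus, so the result tends to look locally grammatical, yet nothing in the program represents what a cat, a mat, or sitting is. Any meaning is supplied by the reader.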

This ‘mimicking’ approach also applies to text-to-image AI, which generates images based on a given text input. This is typically done using a machine learning model trained on a dataset of images and their corresponding textual descriptions. When the model is given a new text input, it uses the patterns and structures it learned from the training dataset to generate an image whose content and style match the input text.

One of the critical features of text-to-image AI is its ability to generate coherent and contextually appropriate images. This means that when you give the AI a text description of an image, it can generate an image relevant to the description's content and context. However, it is essential to note that text-to-image AI does not actually "know" anything in the way that a human would. Instead, it generates images based on patterns and structures learned from the training dataset.

This is why the images generated by text-to-image AI are often perceived as surreal or unreal, as they may contain elements or combinations of objects and scenes that are not seen in the real world, or that fall outside what we would normally perceive as accurate. One reason for this is that text-to-image AI is not limited by the same constraints and biases as humans, so it can generate images that may seem fantastical or surreal to us.

The study of language and its underlying structure has important implications for AI, particularly in developing conversational AI systems like GPT - as in ChatGPT - and text-to-image AI - as in MidJourney. While these systems can generate text and images that are coherent and contextually appropriate, it is crucial to understand that they do not actually "know" anything in the way that a human would. Instead, they follow the rules and conventions of language and image generation that they learned from the input dataset. This is why the text generated by GPT and the images generated by text-to-image AI are often perceived as surreal or unreal, as they may contain elements or combinations that are not found in the real world.

It is important to note that the limitations of AI systems like GPT and text-to-image AI do not diminish their potential value or usefulness. These systems can still be very effective in various applications, such as language translation, content generation, and image annotation. However, it is essential to understand these systems' limitations and use them responsibly, so that they are not used to perpetuate or amplify existing biases or misinformation.

As AI advances and becomes more sophisticated, it will be necessary for researchers, policymakers, and the general public to continue to study and understand the underlying mechanisms of these systems, and to consider the ethical and social implications of their use.